Have you noticed your YouTube recommendations looking a little... weird lately? You're not alone.

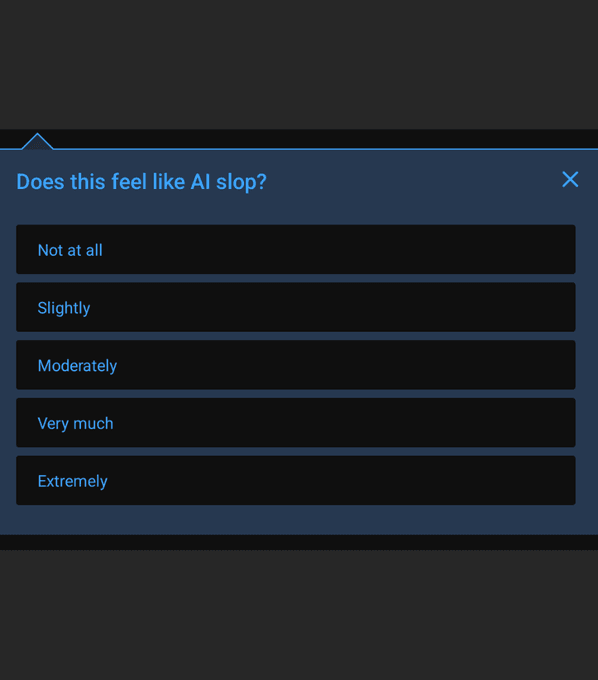

To combat a rising tide of low-effort, AI-generated garbage, YouTube is testing a brand-new rating feature. It asks viewers a surprisingly blunt question: does the video you just watched feel like "AI slop"?

The Fight Against Automated Content

We all know the videos. You click on a historical documentary only to hear a glitchy, robotic voice reading a Wikipedia page, or you stumble across bizarre, AI-generated children's animations that make zero narrative sense.

Until recently, automated moderation tools struggled to tell the difference between a clever use of AI and pure spam. Now, YouTube is putting viewers on the front lines to fix the problem.

By explicitly using the term "AI slop" in its testing prompts, the company is finally calling out mass-produced, low-value media for what it is. Asking the audience adds a much-needed layer of human common sense that pure algorithmic moderation lacks.

When viewers flag these weird, artificially hollow videos, their immediate feedback trains YouTube’s internal systems to spot the garbage faster. In effect, the feature turns the everyday audience into a highly effective quality assurance team.

Impact on Video Discovery

So, what happens when a video gets slapped with the "slop" label? It tanks in the algorithm. Videos consistently flagged by the community will rapidly lose visibility in search results and recommended feeds.
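
Curious what "tanking in the algorithm" could look like in practice? The exact mechanics aren't spelled out here, so treat the following as a toy illustration only, not YouTube's actual ranking logic. Every name and number in it (`Video`, `adjusted_score`, `min_ratings`, `threshold`) is a made-up assumption. The idea: ignore a handful of stray flags, but once enough viewers have rated a video and most of them call it slop, scale its score down in proportion to the flag rate.

```python
from dataclasses import dataclass


@dataclass
class Video:
    title: str
    base_score: float  # hypothetical relevance score before any penalty
    ratings: int       # viewers who answered the rating prompt
    slop_flags: int    # of those, how many chose "AI slop"


def adjusted_score(video: Video, min_ratings: int = 50, threshold: float = 0.5) -> float:
    """Return the score after an assumed community-flag penalty."""
    # Too few ratings to judge: leave the score alone.
    if video.ratings < min_ratings:
        return video.base_score
    flag_rate = video.slop_flags / video.ratings
    # Only "consistently flagged" videos are penalized.
    if flag_rate < threshold:
        return video.base_score
    # The higher the flag rate, the harder the demotion.
    return video.base_score * (1.0 - flag_rate)


def rank(videos: list[Video]) -> list[Video]:
    """Order videos from most to least visible."""
    return sorted(videos, key=adjusted_score, reverse=True)


feed = [
    Video("Robot-voice history recap", base_score=0.9, ratings=200, slop_flags=180),
    Video("Hand-edited documentary", base_score=0.6, ratings=200, slop_flags=10),
    Video("Brand-new upload", base_score=0.8, ratings=10, slop_flags=10),
]

for video in rank(feed):
    print(f"{adjusted_score(video):.2f}  {video.title}")
```

In this sketch the heavily flagged recap drops to the bottom of the feed even though it started with the highest score, while the brand-new upload is left untouched until more viewers weigh in. The minimum-ratings guard is a design choice to keep a few hostile flags from burying a legitimate video.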

For actual human creators, this is fantastic news. If you spend forty hours scripting, filming, and editing a genuine video, you shouldn't have to compete with a bot account uploading fifty generated clips a day.

Burying the automated noise pushes original, high-effort production back to the top where it belongs. By trusting real people to sniff out the spam, YouTube is drawing a hard line to protect the creators who actually make the platform worth watching.