Google Just Killed the LLM Knowledge Cutoff for Devs

Relying on Large Language Models (LLMs) for coding has always had a glaring flaw: the "knowledge cutoff." We’ve all been there. You ask an AI for a Firebase implementation, and it spits out a library that’s been deprecated for eighteen months. It’s a productivity sink. Until now, the only workaround was a messy routine of manual copy-pasting or writing fragile web-scraping scripts to give the AI some context.

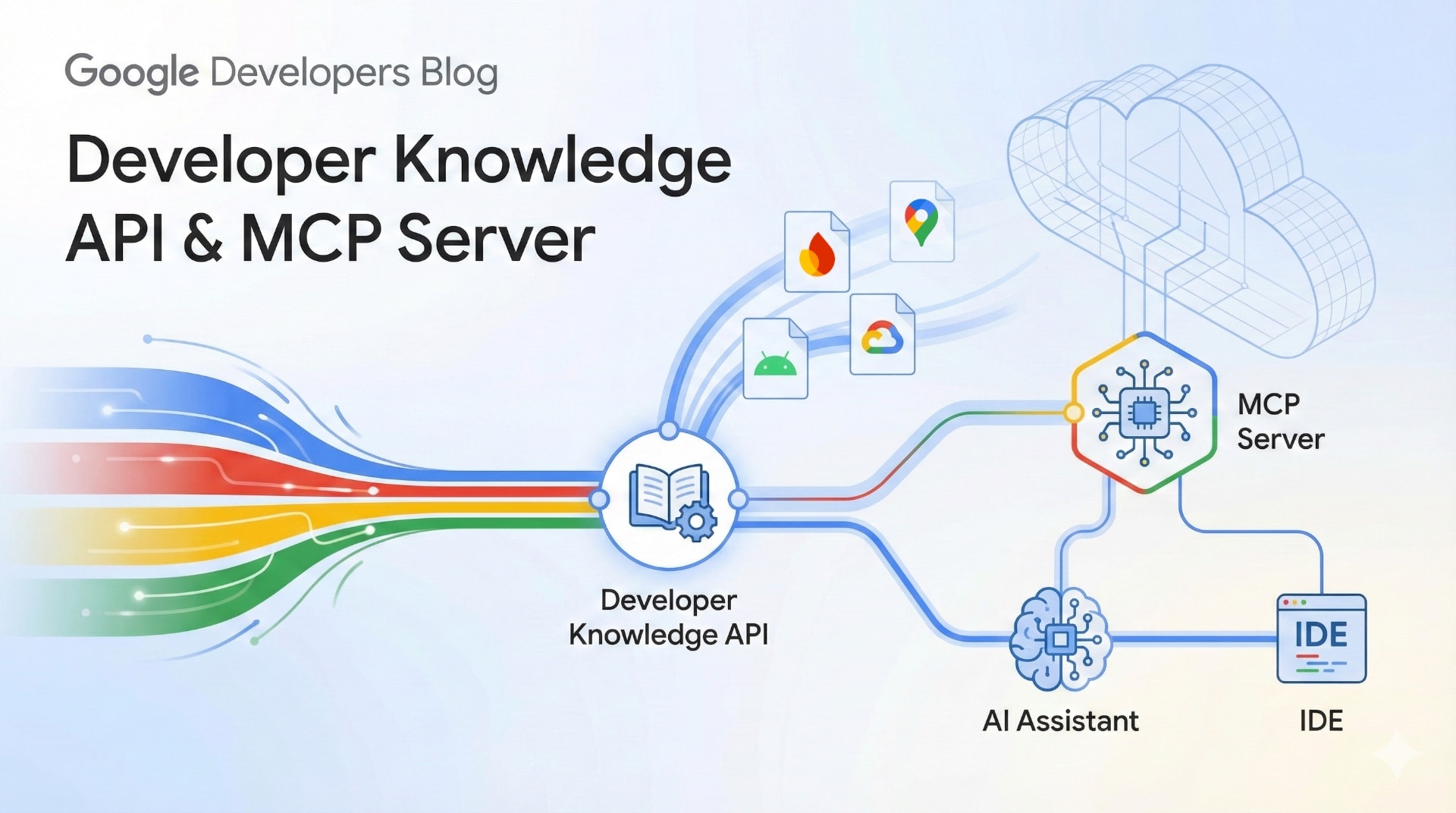

Google’s new Developer Knowledge API and Model Context Protocol (MCP) server change the math. This isn't just another documentation site update. It’s an attempt to turn Google’s technical library into a queryable, live data stream for AI-assisted engineering. By moving documentation into a programmatic format, Google is finally treating docs like code rather than a static reading list.

The real win here is the death of "stale" guidance. Instead of hoping a model has been trained on the latest Android SDK changes, developers can point their tools at a source that updates daily. It cuts the friction of context switching and stops those hallucinated function calls before they end up in your commit.

The API: A Search Engine That Speaks Markdown

The Developer Knowledge API functions as a specialized search engine for Google’s massive technical stack. It covers the heavy hitters: firebase.google.com, developer.android.com, and docs.cloud.google.com. Most search engines return HTML designed for human eyes, which is notoriously difficult for AI to parse cleanly. This API returns Markdown, a format LLMs handle well and one that drops into a prompt without cleanup.
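To make the "injection into a prompt" idea concrete, here is a minimal sketch of how a tool might wrap retrieved Markdown around a question. The `DocSnippet` type, its field names, and the prompt wording are assumptions for illustration, not the API's actual response schema, and the Markdown body is placeholder text.

```python
from dataclasses import dataclass


@dataclass
class DocSnippet:
    """One Markdown result. Field names are illustrative, not the API's schema."""
    source_url: str
    markdown: str


def build_prompt(question: str, snippets: list[DocSnippet]) -> str:
    """Place retrieved Markdown ahead of the question so the model answers from it."""
    sections = [f"Source: {s.source_url}\n\n{s.markdown.strip()}" for s in snippets]
    context = "\n\n---\n\n".join(sections)
    return (
        "Answer using only the documentation below.\n\n"
        f"{context}\n\n"
        f"Question: {question}"
    )


# Placeholder content standing in for a real documentation result.
example = DocSnippet(
    source_url="https://firebase.google.com/docs/cloud-messaging",
    markdown="## Send a message\n\nPlaceholder text standing in for real documentation.",
)
prompt = build_prompt("How do I send a push notification?", [example])
print(prompt)
```

Because the content is already Markdown, no HTML stripping step is needed before it reaches the model.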

Freshness is the metric that matters. In this public preview, Google re-indexes its docs within 24 hours of an update. Let's be cynical for a second: 24 hours isn't "instant." If a zero-day patch or a breaking change drops at 9:00 AM, your AI assistant might still be behind until tomorrow. But compared to an AI model with a knowledge cutoff from two years ago? It’s a revelation. For teams on the bleeding edge of cloud infra, a 24-hour window is a massive upgrade over the status quo.

It’s also about precision. You don't need to feed an AI a 50-page guide to fix one function. The API supports snippet extraction, allowing your tools to pull exactly what they need. This saves tokens and keeps the AI focused on the task at hand rather than drowning in irrelevant documentation.
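The token savings can be sketched on the client side too. The example below assumes the tool already holds a ranked list of Markdown snippets and keeps only as many as fit a budget. The four-characters-per-token estimate is a rough heuristic rather than a real tokenizer, and both function names are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    """Rough estimate: about four characters per token (a heuristic, not a tokenizer)."""
    return max(1, len(text) // 4)


def select_snippets(ranked_snippets: list[str], token_budget: int) -> list[str]:
    """Keep snippets in ranked order until the next one would exceed the budget."""
    selected = []
    used = 0
    for snippet in ranked_snippets:
        cost = estimate_tokens(snippet)
        if used + cost > token_budget:
            break
        selected.append(snippet)
        used += cost
    return selected


# Three dummy snippets of 400 characters, roughly 100 estimated tokens each.
snippets = ["a" * 400, "b" * 400, "c" * 400]
kept = select_snippets(snippets, token_budget=250)
print(len(kept))  # 2
```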

Connecting the Pipes with MCP

The API is the data source, but the Model Context Protocol (MCP) is the delivery truck. MCP is an open standard designed to break down the "data silos" that keep AI models isolated from your local tools. By releasing an official MCP server, Google has built a secure bridge that lets AI assistants in VS Code or your terminal "read" documentation in real time.

This setup transforms a generic chatbot into a Google-specific expert. If you ask, "What’s the best way to handle Firebase push notifications?" the assistant doesn't guess based on its training data. It performs a lookup. It fetches the current Markdown from the source to build its answer.

When you hit an error such as ApiNotActivatedMapError, the assistant can query the docs to find the exact configuration step you missed in the console. It can even handle comparative architecture reviews, like weighing Cloud Run against Cloud Functions, by analyzing the current feature sets of both services side by side. It’s context, delivered exactly when the developer needs it.

Implementation and Workflow Integration

Setting this up is straightforward, though it still requires the usual GCP dance. You start by creating and restricting an API key in the Google Cloud Console. Security is the focus here; you don't want an open pipe to your project data, so the key is scoped specifically to the Developer Knowledge API.

A single command, gcloud beta services mcp enable developerknowledge.googleapis.com --project=PROJECT_ID, turns on the service. From there, you just update your tool’s configuration, like mcp_config.json, to point at the server. Once it’s live, the assistant calls the API autonomously whenever you ask a documentation-related question.

We’re already seeing this in the Gemini CLI. Developers are using "hooks" to pipe official documentation directly into their terminal prompts. It kills the need to hunt through twenty browser tabs to verify a parameter. The "latest truth" is always one command away.
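For the configuration step mentioned above, the sketch below generates the kind of JSON a tool might read from mcp_config.json. The `mcpServers` layout is a convention many MCP clients share, but the exact keys vary by client, so treat the structure as an assumption. The server label, server URL, and API key are placeholders to fill in from Google's setup guide, and passing the key in an `X-Goog-Api-Key` header is likewise an assumption about how this server authenticates.

```python
import json


def build_mcp_config(server_name: str, server_url: str, api_key: str) -> dict:
    """Return a config dict in the common `mcpServers` layout (assumed, client-specific)."""
    return {
        "mcpServers": {
            server_name: {
                "url": server_url,
                # Assumed auth style: the restricted API key sent as a request header.
                "headers": {"X-Goog-Api-Key": api_key},
            }
        }
    }


config = build_mcp_config(
    server_name="google-developer-knowledge",  # hypothetical label
    server_url="SERVER_URL_FROM_GOOGLE_DOCS",  # placeholder, not a real endpoint
    api_key="YOUR_RESTRICTED_API_KEY",         # placeholder
)
config_text = json.dumps(config, indent=2)
print(config_text)
```

Writing `config_text` to the path your tool expects is the last step. Keep the real key out of version control.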

The Road Toward Agentic Workflows

This public preview is just the foundational plumbing. Right now, the service delivers high-quality Markdown, but the roadmap includes structured content. Future updates will introduce specific objects for code samples and API entities. This will allow AI agents to distinguish between a conceptual explanation and a functional code block with much higher precision.

Google is also pushing to shrink that 24-hour re-indexing window. As the gap between a documentation commit and its availability in the API approaches zero, "agentic workflows" become possible. We’re talking about AI agents that monitor documentation for updates and automatically suggest PRs to fix deprecated SDK calls in your codebase.
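As a rough illustration of the "suggest PRs for deprecated calls" idea, the sketch below scans source text for deprecated names and reports a suggested replacement for each hit. The replacement map is hard-coded and entirely hypothetical here. In an agentic setup it would presumably be derived from documentation updates, a step this example does not attempt.

```python
import re


def find_deprecated_calls(
    source: str, replacements: dict[str, str]
) -> list[tuple[int, str, str]]:
    """Return (line_number, deprecated_name, suggested_name) for each match."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for old_name, new_name in replacements.items():
            # Word boundaries avoid matching longer identifiers that contain old_name.
            if re.search(rf"\b{re.escape(old_name)}\b", line):
                findings.append((line_number, old_name, new_name))
    return findings


# Hypothetical names, used only to exercise the scanner.
sample_source = "client.legacy_send(msg)\nclient.send_v2(msg)\nlegacy_send_all()\n"
replacement_map = {"legacy_send": "send_v2"}

findings = find_deprecated_calls(sample_source, replacement_map)
for line_number, old_name, new_name in findings:
    print(f"line {line_number}: replace {old_name} with {new_name}")
```

A regex scan like this is deliberately crude. A real agent would more likely work from a parsed syntax tree to avoid false positives in comments and strings.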

The wall between a developer’s intent and the official technical truth is finally starting to crumble. By treating documentation as a queryable, fresh, and programmatic resource, Google is providing the infrastructure for a more reliable development lifecycle. The "Documentation as Code" philosophy has finally caught up to the AI-first world.