Anthropic just flipped the switch on interactive visual explanations for every Claude user, including those riding the free tier. The move fundamentally rewires how the AI talks to us about hard concepts.

Gone are the days of staring at a wall of text while trying to mentally map out a neural network. Claude now spits out dynamic, interactive UI components directly into the chat window.

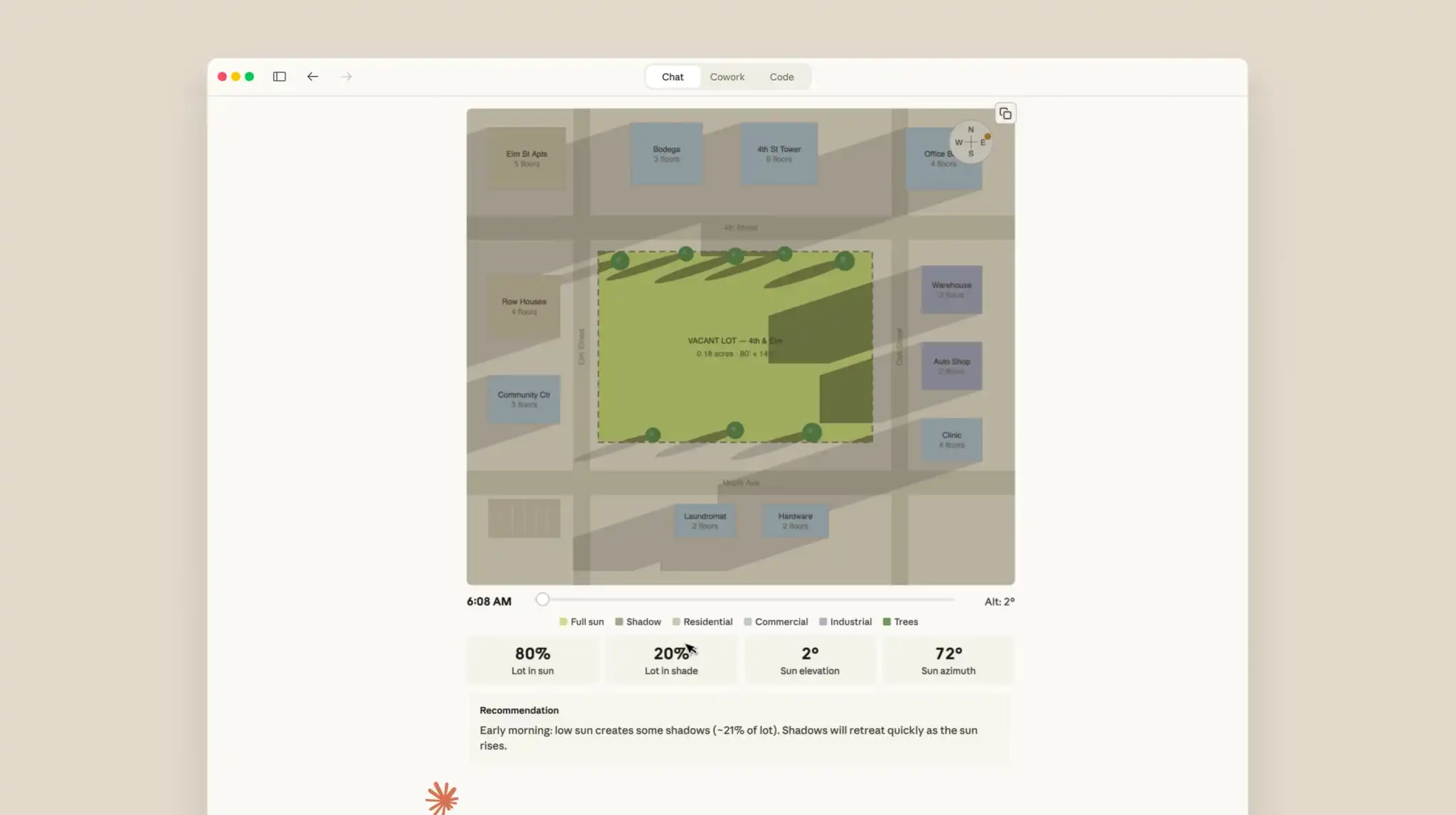

Text alone has always been a terrible way to explain spatial logic or software architecture. Now, when you ask a structural question, you get a custom-built interface to play with right next to the written answer.

Visual Generation Replaces Text-Heavy Responses

Watch what happens when you ask Claude to explain how React state updates. Instead of dumping five paragraphs of jargon, the bot spins up a working, interactive SVG diagram right in its Artifacts panel.

You can actually click through the component lifecycle or drag a slider to see how data flows change in real time. It cuts out the gymnastics usually required to translate AI text into a working mental model.

Hovering over a specific node isolates that part of the system, letting you drill down into exactly what breaks when an API call fails. It feels less like reading an encyclopedia and more like pair programming with a very patient tutor.
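To make the "what breaks when an API call fails" idea concrete, here is a minimal sketch of the logic such a diagram could run when you pick a node. It is not Claude's actual output or implementation. The graph shape, the `affectedBy` helper, and every node name are assumptions made up for illustration.

```typescript
// Hypothetical model: each key is a node in the diagram, and its array lists
// the nodes that depend on it directly. None of these names come from Claude.
type DependencyGraph = Record<string, string[]>;

// Breadth-first walk that collects every node downstream of a failed node.
function affectedBy(graph: DependencyGraph, failed: string): string[] {
  const seen = new Set<string>([failed]);
  const queue: string[] = [failed];
  const affected: string[] = [];
  while (queue.length > 0) {
    const current = queue.shift() as string;
    for (const dependent of graph[current] ?? []) {
      if (!seen.has(dependent)) {
        seen.add(dependent);
        affected.push(dependent);
        queue.push(dependent);
      }
    }
  }
  return affected;
}

// Illustrative graph: an API call feeds a store, which feeds two components.
const exampleGraph: DependencyGraph = {
  apiCall: ["userStore"],
  userStore: ["profileCard", "navBar"],
  profileCard: [],
  navBar: [],
  themeToggle: ["navBar"],
};

console.log(affectedBy(exampleGraph, "apiCall"));
// → [ 'userStore', 'profileCard', 'navBar' ]
```

A diagram could highlight exactly the returned nodes and dim everything else, which is roughly the "isolate on hover" behavior described above.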

Strategic Rollout to Free Tier Users

Giving this away to free users is an incredibly aggressive flex from Anthropic. They clearly want to lock down the market for developers and students who might otherwise bounce between competing bots.

Until now, spinning up custom, interactive UI components on the fly was heavily gated behind $20-a-month paywalls on rival platforms. Breaking that barrier suddenly makes plain-text AI feel like a relic from 2023.

Flooding the free tier also means Anthropic vacuums up an immense amount of interaction data on how regular people use these visual tools. OpenAI and Google will likely have to scramble to match this baseline just to stay relevant.

Enterprise and Educational Impact

For software teams, the utility is immediate and obvious. An engineer can ask Claude to map out a microservices migration and instantly get a clickable architecture map rather than a bulleted list of Docker containers.
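For a feel of what sits behind a clickable architecture map, the sketch below turns a list of services into SVG markup. It is a rough illustration under stated assumptions, not how Claude builds its visuals: the `Service` shape, the `renderArchitectureSvg` function, the single-row layout, and the service names are all hypothetical.

```typescript
// Hypothetical service description; the fields are illustrative assumptions.
interface Service {
  name: string;
  callsTo: string[]; // names of services this one calls
}

// Lay services out in a single row and draw one line per call edge.
// Names are assumed to be plain identifiers, so no XML escaping is done here.
function renderArchitectureSvg(services: Service[]): string {
  const spacing = 160;
  const margin = 80;
  const radius = 30;
  const y = 60;

  // Assign each service an x position based on its index in the list.
  const xOf = new Map<string, number>();
  services.forEach((service, index) => {
    xOf.set(service.name, margin + index * spacing);
  });

  const edges: string[] = [];
  const nodes: string[] = [];
  for (const service of services) {
    const x1 = xOf.get(service.name) as number;
    for (const target of service.callsTo) {
      const x2 = xOf.get(target);
      if (x2 === undefined) continue; // skip calls to services not in the list
      edges.push(`<line x1="${x1}" y1="${y}" x2="${x2}" y2="${y}" stroke="gray" />`);
    }
    // The <title> child gives browsers a native hover tooltip for the node.
    nodes.push(
      `<g class="node" data-service="${service.name}">` +
        `<title>${service.name}</title>` +
        `<circle cx="${x1}" cy="${y}" r="${radius}" fill="white" stroke="black" />` +
        `<text x="${x1}" y="${y}" text-anchor="middle">${service.name}</text>` +
        `</g>`
    );
  }

  const width = margin * 2 + Math.max(0, services.length - 1) * spacing;
  return (
    `<svg xmlns="http://www.w3.org/2000/svg" width="${width}" height="120">` +
    edges.join("") +
    nodes.join("") +
    `</svg>`
  );
}

// Illustrative input: three services, one call to a service outside the list.
const migrationPlan: Service[] = [
  { name: "gateway", callsTo: ["orders"] },
  { name: "orders", callsTo: ["billing", "legacy-db"] },
  { name: "billing", callsTo: [] },
];

const svgMarkup = renderArchitectureSvg(migrationPlan);
console.log(svgMarkup);
```

Edges are drawn before nodes so the circles sit on top of the lines. The `data-service` attribute gives a click handler something to key on, and a real map would need a smarter layout than one row, since lines between non-adjacent services overlap here.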

Students get a similar upgrade, trading static textbook charts for interactive models. A biology major can now drag a slider to step through a custom animation of mitosis generated entirely on the fly.

We have officially hit the ceiling of what text-only reasoning can do for consumers. If an AI assistant in 2026 can't show you its work with a working visual interface, it simply isn't competitive.